Unlock strategies to enhance content visibility in AI search for SaaS. Learn how to get cited and boost your traffic effectively!

TL;DR:

- Traditional SEO focuses on rankings, but AI visibility depends on content being cited in responses.

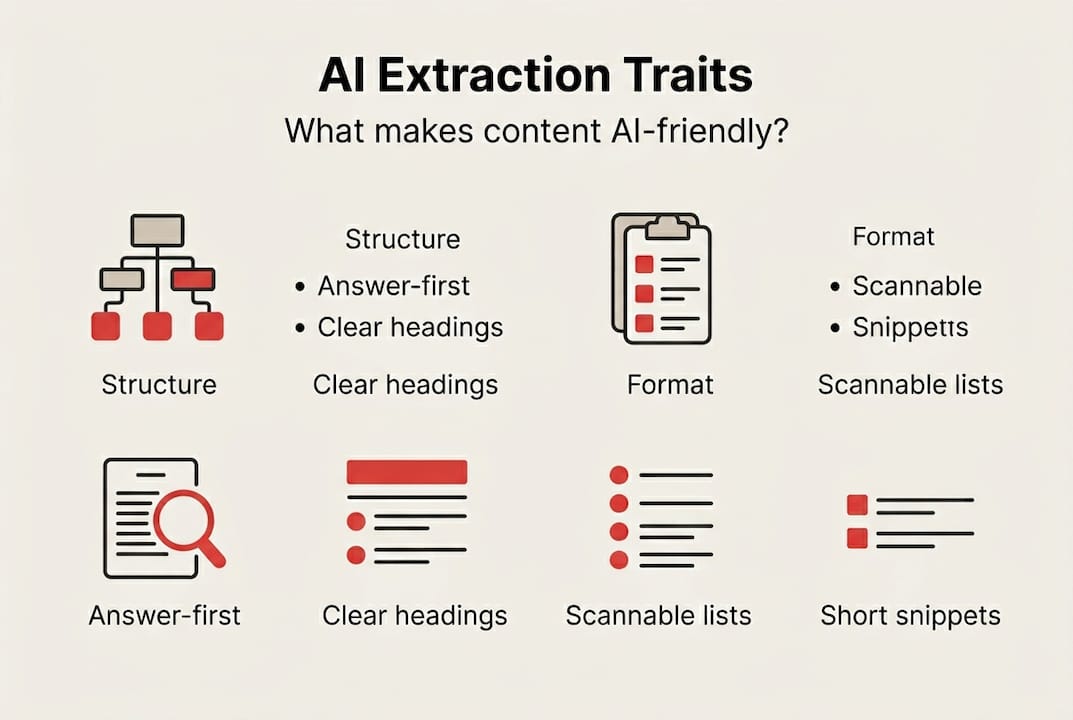

- Content must be structured with direct answers, question-based headings, and scannable formats to be extractable.

- Monitoring AI citations requires multiple prompt runs and long-term tracking to recognize patterns.

Your content is ranking on page one of Google. Traffic is still dropping. Sound familiar? That’s the new reality for SaaS marketing teams in 2026. The shift isn’t subtle. AI search engines like ChatGPT, Perplexity, and Claude are pulling answers directly from content and serving them without ever sending a click.

Traditional SEO tactics are still necessary, but they’re no longer sufficient. You need to be cited inside AI-generated answers, not just ranked somewhere below them. This guide shows you exactly how to get there.

Key Takeaways

| Point | Details |
|---|---|
| Optimize for extraction | Focus on answer-first structures, question-mirroring headings, and scannable formats to ensure AI engines can cite your content. |
| Track with multi-run metrics | Measure visibility and citations over several prompts and across time, since AI search results are volatile. |
| Prioritize answerability over ranking | Legacy SEO helps, but your content must be engineered to be used inside AI-generated results, not just found in search listings. |
| Adapt team processes early | SaaS brands that transition now to extractable, purposeful content will dominate future AI discovery. |

Why AI search visibility requires different strategies

Here’s the core problem. Traditional SEO is built around a simple model: earn backlinks, target keywords, get ranked, get clicked. That model assumes a human is scanning a list of blue links and choosing one. AI search breaks that assumption completely.

When someone asks Perplexity “what’s the best SaaS onboarding tool,” the engine doesn’t return a list. It returns a synthesized answer, usually citing two or three sources inside the response itself. If your content isn’t one of those cited sources, you don’t exist in that interaction. It doesn’t matter that you’re ranking #1 on Google.

AI engines extract from clear, scannable content with question-mirroring headings and answer-first structure. That’s the key difference. Domain authority and keyword density alone no longer decide visibility. What matters is whether your content is structured so that a language model can easily pull a clean, confident answer from it.

Here’s a quick comparison so you can see the shift side by side:

Classic SEO tactics vs. AI visibility tactics

- Classic: Target high-volume keywords → AI: Mirror question phrasing in headings

- Classic: Build domain authority through backlinks → AI: Build extractability through self-contained paragraphs

- Classic: Optimize title tags and meta descriptions → AI: Lead every section with a direct answer

- Classic: Write long-form pillar content → AI: Write modular, quotable content blocks

- Classic: Focus on click-through rates → AI: Focus on citation and brand mention frequency

The content that gets extracted and cited tends to share a few clear traits. It answers the question in the first sentence. It uses structured formatting like lists and tables. It keeps paragraphs short and self-contained. Explore the SEO success factors for 2026 to see how these signals are evolving across both traditional and AI-driven discovery.

Extraction likelihood by content format

| Content format | Extraction likelihood | Why it works |
|---|---|---|
| Answer-first paragraphs | Very high | Gives AI a clean, confident quote |
| Numbered step-by-step lists | High | Easy to structure into AI responses |
| Comparison tables | High | Directly usable in synthesized answers |
| FAQ blocks | Very high | Matches query structure naturally |
| Wall-of-text explanations | Very low | Hard to extract clean answers from |
| Long narrative introductions | Low | Delays the actual answer too long |

This table isn’t theory. It reflects how language models process and prioritize content. Start thinking of your content as raw material for AI responses, not just web pages to be ranked.

How to structure and format content for maximum extraction

Now let’s get practical. Restructuring content for AI extraction doesn’t require a complete overhaul. It requires a shift in how you open every section, how you write every paragraph, and how you label every heading.

Here’s a simple step-by-step process your team can follow:

- Lead with the answer. Every section should open with a direct, one-sentence response to the implied question. Don’t warm up. Don’t contextualize. Just answer first, then explain.

- Rewrite your headings as questions or near-questions. Instead of “Our onboarding process,” write “How does the onboarding process work?” AI engines are tuned to match user queries, and your headings are a signal.

- Break every explanation into short, self-contained paragraphs. Three to four sentences max. Each paragraph should be able to stand alone as a quotable block. If you need five sentences to make a point, split it into two paragraphs.

- Add a FAQ section to every piece of content. FAQ blocks are citation drivers because they mirror the exact format of user queries. They give AI engines a ready-made extraction point.

- Use tables and lists wherever comparisons or steps exist. Structured data is far easier for a language model to process than prose comparisons buried in paragraphs.

- Audit your existing content for extraction blockers. Long intros, buried answers, and unbroken paragraphs are the three biggest offenders. Find them and fix them in batches.
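If you want to batch that audit, a short script can do the first pass. What follows is a minimal sketch, not a finished tool: the function names, the four-sentence limit, and the list of question words are all assumptions chosen for illustration, and the sentence splitter is deliberately naive (abbreviations like "e.g." will inflate the count).

```python
import re

# Assumed threshold, taken from the "three to four sentences max" guideline above.
MAX_SENTENCES = 4

# Assumed list of question openers; extend it to match your own heading style.
QUESTION_WORDS = {"how", "what", "why", "when", "where", "which", "who",
                  "can", "does", "do", "is", "are", "should"}


def split_sentences(paragraph: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [part for part in parts if part]


def find_long_paragraphs(text: str, max_sentences: int = MAX_SENTENCES) -> list[tuple[int, int]]:
    """Return (paragraph_index, sentence_count) for each paragraph over the limit."""
    paragraphs = [p for p in re.split(r"\n\s*\n", text) if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = len(split_sentences(paragraph))
        if count > max_sentences:
            flagged.append((index, count))
    return flagged


def is_question_heading(heading: str) -> bool:
    """Heuristic: the heading ends with '?' or opens with a question word."""
    words = heading.strip().lower().split()
    if not words:
        return False
    return heading.strip().endswith("?") or words[0] in QUESTION_WORDS


draft = "One. Two. Three.\n\nA. B. C. D. E."
print(find_long_paragraphs(draft))                    # [(1, 5)]
print(is_question_heading("Our onboarding process"))  # False
```

Treat the output as a to-do list for a human editor rather than a verdict. A flagged paragraph may still read fine, and a heading that passes the check may still bury the answer.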

Pro Tip: When reformatting legacy content, start with your highest-traffic pages, not your newest ones. Those pages already have some authority signal. Adding an answer-first structure and a FAQ block can dramatically increase their citation potential without starting from scratch.

Here’s what that restructuring looks like in practice:

Before and after content structure comparison

| Element | Before (SEO-focused) | After (AI-optimized) |
|---|---|---|
| Section opening | “In this section, we’ll walk you through…” | Direct one-sentence answer to the implied question |
| Heading style | “Our approach to customer success” | “How does customer success work at [Company]?” |
| Paragraph length | 6-8 sentences, dense and flowing | 3-4 sentences, self-contained and quotable |
| Supporting data | Embedded in narrative prose | Pulled into a table or callout |
| Content ending | Summary paragraph | FAQ block with 3-5 questions |

Check out the full AI-driven content visibility guide for deeper guidance on each of these elements. If you want a ready-to-use format for your team, the AI-powered content checklists make this process repeatable at scale. For SaaS-specific applications, the content visibility checklist for SaaS is worth bookmarking right now.

Measuring and monitoring your AI search visibility

Structuring for extraction is step one. Knowing whether it’s working is step two. This is where most SaaS teams get tripped up, because measuring AI search visibility is not like measuring Google rankings.

“Measurement in AI search requires probabilistic thinking. Single-run checks miss volatility. Use sustained observation windows and multiple prompt runs to get reliable visibility metrics.”

That blockquote captures the key insight. AI search results are not deterministic. The same prompt can return different citations on different runs, different days, or even different sessions. A single check that shows your brand being cited means almost nothing on its own.

Here’s a practical monitoring workflow for SaaS teams:

- Build a prompt library. Write 15 to 25 prompts that reflect how your target buyers describe their problems. These are your measurement instruments. Keep them consistent so you can track changes over time.

- Run each prompt multiple times per session. At minimum, three runs per prompt. Look for patterns, not perfect consistency. If your brand is cited in two of three runs, that’s a signal worth tracking.

- Log results in a structured format. Track the date, the prompt, which AI engine you used, whether your brand was cited, and which competitors were cited. A simple spreadsheet works fine to start.

- Establish a weekly review cadence. Don’t check daily. Daily fluctuations are noise. Weekly reviews let you spot real trends in citation frequency and brand mention patterns.

- Test prompt variations. Small changes in how a question is phrased can shift which sources get cited. Testing variations helps you understand what content your audience’s actual queries are surfacing.
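The logging step is the easiest one to standardize. Here is one possible shape for a run record, sketched in Python with only the standard library. The `PromptRun` class, its field names, and the sample values are hypothetical; the columns simply mirror the fields listed in the workflow above, and the CSV output can be pasted straight into a spreadsheet.

```python
import csv
import io
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptRun:
    """One manual or scripted run of a prompt against one AI engine."""
    run_date: date
    prompt: str
    engine: str
    brand_cited: bool
    competitors_cited: list[str] = field(default_factory=list)


def to_csv(runs: list[PromptRun]) -> str:
    """Serialize runs to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["date", "prompt", "engine", "brand_cited", "competitors_cited"])
    for run in runs:
        writer.writerow([
            run.run_date.isoformat(),
            run.prompt,
            run.engine,
            "yes" if run.brand_cited else "no",
            "; ".join(run.competitors_cited),  # several competitors share one cell
        ])
    return buffer.getvalue()


# Placeholder data for illustration only.
runs = [
    PromptRun(date(2026, 1, 5), "best SaaS onboarding tool", "Perplexity", True, ["CompetitorA"]),
    PromptRun(date(2026, 1, 5), "best SaaS onboarding tool", "Perplexity", False),
]
print(to_csv(runs))
```

Keeping one row per run, rather than one row per prompt, is the design choice that matters here. It preserves the run-to-run variation you need for the weekly review.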

Key metrics to track across your monitoring workflow:

- Citation count per prompt run

- Brand mention frequency across all prompts

- Run diversity (how often you appear across varied phrasings)

- Observation duration (track over weeks, not days)

- Competitor citation frequency (to benchmark your relative visibility)

- Which pages are being cited (to identify your best-performing content)
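Several of these metrics fall out of the run log with a few lines of code. The sketch below assumes each logged run is a simple tuple of date, prompt, cited flag, and competitor list. The function names and the tuple layout are illustrative assumptions, not a standard.

```python
from collections import Counter, defaultdict
from datetime import date

# Assumed record layout: (run_date, prompt, brand_cited, competitors_cited)
Run = tuple[date, str, bool, list[str]]


def citation_rate_by_prompt(runs: list[Run]) -> dict[str, float]:
    """Share of runs per prompt in which the brand was cited."""
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for _, prompt, brand_cited, _ in runs:
        totals[prompt] += 1
        cited[prompt] += int(brand_cited)
    return {prompt: cited[prompt] / totals[prompt] for prompt in totals}


def weekly_citation_rate(runs: list[Run]) -> dict[tuple[int, int], float]:
    """Citation rate across all prompts, grouped by (ISO year, ISO week)."""
    totals: dict[tuple[int, int], int] = defaultdict(int)
    cited: dict[tuple[int, int], int] = defaultdict(int)
    for run_date, _, brand_cited, _ in runs:
        iso = run_date.isocalendar()
        week = (iso[0], iso[1])
        totals[week] += 1
        cited[week] += int(brand_cited)
    return {week: cited[week] / totals[week] for week in totals}


def competitor_citation_counts(runs: list[Run]) -> Counter:
    """How many runs cited each competitor, for benchmarking."""
    counts: Counter = Counter()
    for _, _, _, competitors in runs:
        counts.update(set(competitors))  # count each competitor once per run
    return counts
```

With three runs of one prompt and two citations, `citation_rate_by_prompt` returns roughly 0.67 for that prompt, which matches the "two of three runs" signal described in the workflow. Grouping by ISO week keeps the review on a weekly cadence so daily swings don't dominate.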

The goal here is pattern recognition, not perfection. For a broader look at what this kind of tracking does for your overall program, the AI-powered marketing ROI framework is a solid reference. If you want to connect this to revenue impact, learn how to measure content performance in a way that ties visibility gains to pipeline outcomes.

Common obstacles SaaS teams face and how to fix them

Even with a solid plan, execution runs into friction. Here are the most common obstacles SaaS marketing teams hit when shifting to AI search optimization, and what to do about each one.

Pitfall 1: Prioritizing rankings over answerability.

This is the biggest one. Many teams still prioritize legacy SEO factors, running content through keyword density checks and meta tag audits while ignoring whether the content actually answers a question clearly. The fix is simple. Add an “answerability check” to your content review process. Before publishing, ask: can a reader get the core answer from the first two sentences of each section? If not, rewrite the opening.

Pitfall 2: Ignoring self-contained snippets.

Content that assumes the reader has context from the paragraph before won’t be extracted cleanly. AI engines pull isolated blocks. If your paragraph makes sense only in context of what came before it, it’s not extractable. Fix this by writing every paragraph as if it might be the only one someone reads. Give it enough context to stand alone.

Pitfall 3: Wall-of-text explanations.

This is common in technical SaaS content. The instinct is to be thorough, so teams write long, detailed paragraphs that cover every nuance. AI engines struggle to pull a clean answer from dense prose. Break it up. Use sub-bullets, numbered steps, or a table to organize complex information. Your human readers will thank you too.

Pitfall 4: Skipping FAQ blocks.

FAQ sections feel repetitive to writers who just covered the same material in the body of the article. But they serve a completely different purpose for AI engines. They’re pre-formatted extraction points. Every piece of SaaS content should have one. It takes 20 minutes to add and can meaningfully increase citation likelihood.

Pitfall 5: Publishing and moving on.

AI visibility optimization is not a one-time task. AI engines update their models and the sources they draw from, their citation patterns shift, and competitor content improves. Teams that treat optimization as an ongoing process see compounding gains over time. Build a quarterly content refresh cycle into your workflow.

Pro Tip: To quickly spot extraction blockers in existing content, copy a section into ChatGPT and ask it to answer the main question using only that section. If it struggles or gives a vague response, your content isn’t extractable. That’s your signal to rewrite the opening and tighten the structure.
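To keep that check consistent across writers, it helps to fix the wording of the test prompt. The helper below is a small sketch that only builds the prompt text; it does not call any AI service. The function name and the exact instruction wording are assumptions you should adapt.

```python
def build_extraction_check_prompt(section_text: str, question: str) -> str:
    """Build a prompt that asks a model to answer from one section only."""
    return (
        "Answer the question using only the section below. "
        "If the section does not contain a clear answer, say so.\n\n"
        f"Question: {question}\n\n"
        f"Section:\n{section_text.strip()}"
    )


prompt = build_extraction_check_prompt(
    "Onboarding takes three steps: connect your data, invite your team, set goals.",
    "How does the onboarding process work?",
)
print(prompt)
```

Paste the result into the AI tool of your choice. Using the same template every time means a vague answer points at the section, not at how the question was asked.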

For a broader look at what SEO-friendly content looks like when it’s also built for AI extraction, that resource walks through the overlap well. If you’re working on technical SaaS pages specifically, web page optimization for SaaS covers the structural side in detail. And for the long game, sustainable web traffic growth shows how AI visibility compounds over time rather than spiking and dropping.

Why traditional SEO won’t win the next visibility war

Here’s the uncomfortable truth. Most SaaS marketing teams are still optimizing for a search experience that their buyers are using less and less. They’re chasing rankings on a platform that increasingly sends traffic to AI-generated summaries rather than to the original source. The mental model of “rank higher, get more traffic” is becoming obsolete faster than most teams realize.

The SaaS teams moving fastest right now aren’t the ones with the biggest content budgets or the strongest domain authority. They’re the ones who recognized early that being found is no longer the same as being cited. They restructured their content, not their backlink strategy. They trained their writers to lead with answers, not introductions. They built monitoring workflows around citation tracking, not position tracking.

It’s not enough to have authority or rich content. You have to actively engineer for extractability. That’s a fundamentally different skill set from what most content teams have been trained to do. It requires rethinking what “good content” looks like. Shorter. More direct. More modular. Less narrative, more utility.

The teams that adapt now will build a citation footprint that compounds. AI engines learn from patterns. If your content is consistently cited across multiple prompt variations and observation windows, that footprint grows. The teams still waiting to see how this shakes out are already behind.

Rethink your measurement too. If your weekly content review still centers on keyword rankings and organic sessions, you’re measuring the wrong thing. Start tracking modern SEO vs. AI discoverability signals in parallel. The two aren’t mutually exclusive. But one of them is growing in importance fast.

Frequently asked questions

What is AI search content visibility?

AI search content visibility means your content appears as cited answers or brand mentions within AI-generated search results, not just in ranked listings. Being cited inside the response is the actual goal, not the traditional click-through.

How can I increase the chances my content is cited by AI?

Format with direct answers, clear question-based headings, and scannable lists or tables to match AI extraction patterns. Answer-first structure and FAQ blocks consistently drive higher citation likelihood across major AI engines.

How do I measure my content’s AI search visibility?

Track citations and brand mentions over multiple prompt runs and longer time windows to account for result volatility. Multi-run prompting and sustained observation periods give you far more reliable visibility data than single checks.

Is traditional SEO still important for AI search?

SEO fundamentals still matter for discoverability, but purpose-built extractable formats are required to actually appear inside AI-generated answers. SEO is necessary but not sufficient when extractability is what drives AI citations.

About the Author

Josh Anderson, Co-Founder & CEO at Rule27 Design

Operations leader and full-stack developer with 15 years of experience disrupting traditional business models. I don't just strategize, I build. From architecting operational transformations to coding the platforms that enable them, I deliver end-to-end solutions that drive real impact. My rare combination of technical expertise and strategic vision allows me to identify inefficiencies, design streamlined processes, and personally develop the technology that brings innovation to life.
