Learn how strategic digital infrastructure planning delivers 40% efficiency gains for growth-stage companies through scalable architectures and proven frameworks.

Growth-stage companies face a critical challenge: their digital infrastructure must evolve as rapidly as their business does. Without a clear plan, you risk accumulating costly technical debt that slows operations and limits competitive advantage. This guide provides a practical framework for building scalable, efficient digital infrastructure that adapts to your growth trajectory. You’ll learn how to prepare requirements, implement proven architectures, and verify outcomes that align with business goals.

Key takeaways

| Point | Details |
|---|---|
| Strategic planning prevents tech debt | Comprehensive infrastructure planning eliminates costly rework and enables seamless scaling as your company grows. |
| Integrated architectures deliver performance | Modern architectural patterns reduce response times to milliseconds while supporting millions of concurrent users reliably. |
| Elasticity ensures business continuity | Distributed scaling and automated resource allocation maintain performance during traffic spikes without manual intervention. |
| Serverless reduces operational overhead | Cloud-native approaches like serverless computing cut infrastructure management time by 40% while improving responsiveness. |
| Continuous verification drives optimization | Regular performance tracking and data-driven adjustments ensure infrastructure investments deliver measurable business value. |

Understanding the problem: why digital infrastructure planning matters

Digital infrastructure planning encompasses the strategic design of compute resources, storage systems, networking architecture, and security frameworks that power your operations. A digital infrastructure plan enables scalable, resilient foundations for long-term growth. The benefits extend beyond technical performance to drive real business outcomes.

Strategic infrastructure planning delivers three critical advantages. First, it creates scalable operations that grow with demand without requiring complete rebuilds. Second, it enables innovation by providing flexible foundations for new products and features. Third, it establishes competitive advantage through faster response times and superior user experiences. Companies with planned infrastructure adapt to market changes 60% faster than those relying on reactive approaches.

The risks of inadequate planning compound over time. Technical debt accumulates when quick fixes replace strategic decisions, eventually requiring expensive migrations. Inefficient architectures waste resources on manual scaling and firefighting instead of product development. System fragmentation creates security vulnerabilities and integration challenges that slow every new initiative.

Global IT infrastructure spending continues to accelerate as companies recognize these stakes. Growth-stage organizations face unique challenges in this landscape:

- Balancing immediate feature delivery with long-term architectural soundness

- Choosing between build, buy, or customize decisions without clear frameworks

- Scaling technical teams and systems simultaneously

- Managing costs while maintaining performance and reliability

- Integrating legacy systems with modern cloud-native approaches

Understanding information architecture principles provides essential context for infrastructure decisions. The technical foundation you build today determines which opportunities you can pursue tomorrow.

Preparing your digital infrastructure plan: requirements and tools

Successful infrastructure planning starts with understanding core scaling concepts. Horizontal scaling distributes workloads across multiple servers, which improves fault tolerance and flexibility, while vertical scaling adds resources to existing machines. Elasticity takes this further by automatically adjusting resources based on real-time demand, so you pay only for what you use.
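
To make elasticity concrete, here is a minimal sketch of a proportional scaling rule, similar in spirit to the one documented for the Kubernetes Horizontal Pod Autoscaler. The function name, the metric (average CPU percent), and the replica bounds are illustrative assumptions, not a prescribed configuration.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count by the ratio of observed load to target load.

    All names and default bounds here are hypothetical, for illustration only.
    """
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so a metric spike (or an idle period) cannot scale without bound.
    return max(min_replicas, min(max_replicas, raw))


# 4 servers averaging 90% CPU against a 60% target -> scale out to 6.
print(desired_replicas(4, 90, 60))
# The same 4 servers at 30% CPU -> scale in to 2.
print(desired_replicas(4, 30, 60))
```

The clamp is the part worth tuning first: the minimum protects availability during quiet periods, and the maximum caps spend during unexpected spikes.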

Distributed scaling architectures spread processing across geographic regions and availability zones, while centralized approaches concentrate resources in single locations. Distributed models provide better fault tolerance and lower latency for global users, though they require more sophisticated coordination. Most growth-stage companies benefit from hybrid approaches that balance complexity with resilience.

Essential infrastructure tools fall into three categories. Monitoring systems track performance metrics, resource utilization, and user experience in real time. Provisioning and orchestration tools like Terraform and Kubernetes deploy and configure resources consistently across environments. Resource distribution platforms manage load balancing, traffic routing, and failover mechanisms.

Your requirements checklist should cover four foundational areas:

- Compute: Processing power for applications, databases, and background jobs

- Storage: Persistent data systems with appropriate performance and durability characteristics

- Networking: Connectivity, bandwidth, and latency requirements for users and services

- Security: Authentication, authorization, encryption, and compliance controls

Pro Tip: Start by documenting current bottlenecks and pain points rather than trying to design the perfect system. Your existing challenges reveal the most important requirements to address first.

| Scaling Method | Best For | Key Advantage | Primary Limitation |
|---|---|---|---|
| Horizontal | Web applications, APIs | Linear cost scaling, fault tolerance | Requires stateless design |
| Vertical | Databases, legacy systems | Simpler architecture | Hardware limits, single point of failure |
| Elastic | Variable workloads | Cost optimization | Complexity in implementation |

Understanding system scalability fundamentals helps you choose the right approach for each component. Modern infrastructure rarely relies on a single method, instead combining techniques based on specific workload characteristics.

The preparation phase also requires establishing baseline metrics. Measure current response times, resource costs, and operational overhead before implementing changes. These baselines become essential for validating improvements and calculating ROI. Document your existing backend architecture patterns to identify integration points and dependencies that constrain future decisions.

Executing the plan: implementing scalable and efficient architectures

Implementing scalable infrastructure follows a systematic process that balances immediate needs with long-term flexibility. Start by identifying components that benefit most from modernization, typically those causing the most operational pain or limiting business growth.

The serverless-first implementation process:

- Audit existing workloads to identify stateless, event-driven components suitable for serverless migration

- Design function boundaries that align with business capabilities rather than technical layers

- Implement automated deployment pipelines that handle testing, staging, and production releases

- Configure monitoring and alerting for function performance, errors, and cost thresholds

- Gradually migrate traffic from legacy systems while maintaining fallback options

- Optimize function configurations based on real-world performance data
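
The gradual migration step above can be sketched as a percentage-based router. This is one common way to shift traffic, assuming each request carries a stable user identifier; the function name and the "new"/"legacy" labels are hypothetical.

```python
import hashlib


def route(user_id: str, new_system_percent: int) -> str:
    """Send a stable slice of users to the new system, the rest to legacy.

    Hashing the user ID keeps each user on the same side between requests,
    and raising the percentage only ever moves users from legacy to new.
    """
    if not 0 <= new_system_percent <= 100:
        raise ValueError("new_system_percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "new" if bucket < new_system_percent else "legacy"


# Start small, watch error rates and latency, then raise the percentage.
for percent in (5, 25, 100):
    print(percent, route("user-42", percent))
```

Setting the percentage back to 0 is the fallback option: every user returns to the legacy path without a redeploy.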

The BBC improved site speed and responsiveness by adopting a serverless-first approach, demonstrating how established organizations successfully modernize infrastructure. Their transition reduced deployment times from hours to minutes while improving reliability during traffic spikes.

| Architecture Type | Response Time | Scaling Speed | Operational Overhead | Best Use Case |
|---|---|---|---|---|
| Serverless | 10-100ms | Instant | Minimal | Event-driven workflows, APIs |
| Container-based | 50-200ms | Minutes | Moderate | Microservices, batch processing |
| Monolithic | 100-500ms | Hours | High | Legacy systems, complex transactions |

Pro Tip: Don’t force everything into serverless patterns. Maintain container-based or traditional architectures for stateful components like databases and long-running processes, creating a hybrid approach that leverages each model’s strengths.

Integrated architectural patterns reduce response times to milliseconds and support millions of users reliably. These patterns combine serverless functions, managed databases, content delivery networks, and caching layers into cohesive systems. The integration points between components often determine overall performance more than individual service optimization.

Design considerations for modern architectures prioritize three qualities. Responsiveness ensures users receive feedback quickly, even when backend processing continues asynchronously. Reliability maintains functionality during partial failures through circuit breakers and graceful degradation. Scalability handles growth automatically without manual intervention or architecture changes.
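
As an illustration of the reliability point, here is a minimal circuit breaker sketch with a fallback for graceful degradation. The class name, thresholds, and the injectable clock are assumptions made for this example; production systems usually rely on a maintained library or platform feature instead.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stop calling a failing dependency for a while and serve a fallback."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, func: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                return fallback()  # open: fail fast, skip the dependency
            # Timeout elapsed: fall through and allow one trial call.
        try:
            result = func()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            return fallback()
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A typical use wraps a remote call and degrades to cached or default data, for example `breaker.call(fetch_recommendations, lambda: [])`, where `fetch_recommendations` is a hypothetical function that may raise.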

Real-world implementation reveals common challenges. State management across distributed functions requires careful design of data flows and event sourcing patterns. Cold start latency in serverless functions can impact user experience, requiring warming strategies or alternative approaches for latency-sensitive paths. Cost optimization demands ongoing monitoring as usage patterns evolve.

Successful execution balances technical excellence with pragmatic delivery. Ship working solutions quickly, then iterate based on actual usage patterns rather than theoretical optimization. Your backend architecture decisions should enable rapid experimentation while maintaining production stability.

Verifying and optimizing: measuring success and scaling further

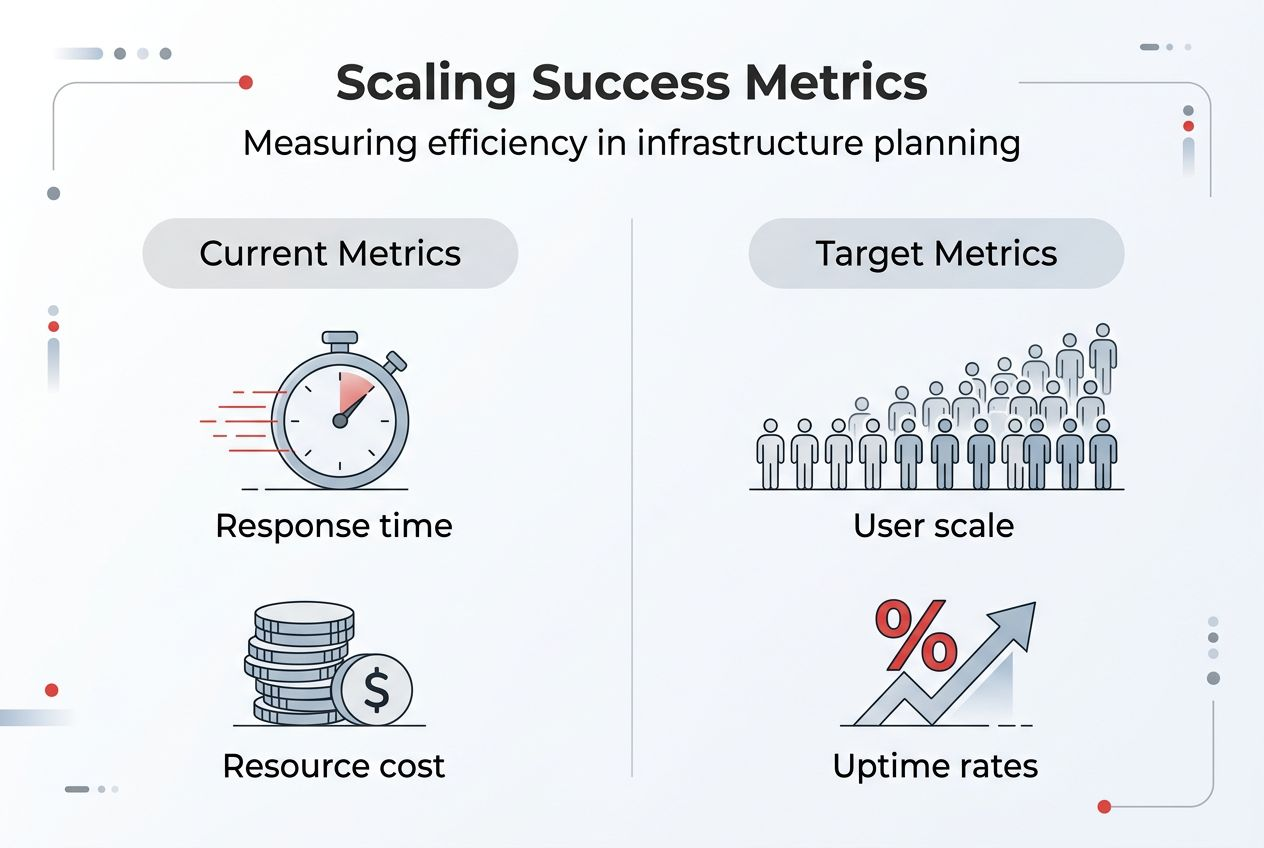

Verification transforms infrastructure investments into measurable business outcomes. Establish key performance indicators that connect technical metrics to business goals, tracking both system health and organizational impact.

Critical KPIs for infrastructure success include:

- Response time percentiles (p50, p95, p99) for user-facing operations

- System uptime and availability across geographic regions

- Infrastructure costs per user or transaction

- Developer productivity measured by deployment frequency and lead time

- Error rates and mean time to recovery for incidents
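
For the percentile KPI above, a short sketch shows how p50, p95, and p99 can be derived from raw response-time samples using the nearest-rank method. Monitoring tools often use interpolation or streaming estimates instead, so treat this as an illustration; the sample data is synthetic.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample that is at least as
    large as pct percent of all samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range 1..100")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Synthetic response times in milliseconds: 1, 2, ..., 100.
response_times_ms = list(range(1, 101))
for pct in (50, 95, 99):
    print(f"p{pct} = {percentile(response_times_ms, pct)} ms")
```

Tracking p95 and p99 alongside p50 matters because averages hide the slow requests that a meaningful share of users actually experience.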

Tracking methods vary by metric type. Application performance monitoring tools provide real-time visibility into response times and error rates. Cloud cost management platforms break down spending by service, team, and project. Developer surveys and deployment analytics reveal productivity trends that pure technical metrics miss.

| Metric Category | Typical Baseline | Target Improvement | Measurement Frequency |
|---|---|---|---|
| Response time (p95) | 800ms | Under 200ms | Continuous |
| Infrastructure cost per user | $2.50 | Under $1.50 | Monthly |
| Deployment frequency | Weekly | Daily | Weekly |
| System uptime | 99.5% | 99.9% | Real-time |
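
To put the uptime row in perspective, the sketch below converts an availability percentage into an allowed-downtime budget. The 30-day period is an assumption chosen for illustration; use whatever window your service-level targets define.

```python
def downtime_minutes(availability_percent: float, period_days: int = 30) -> float:
    """Minutes of downtime permitted in the period at a given availability."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - availability_percent) / 100


# Moving from 99.5% to 99.9% shrinks the 30-day budget from about
# 216 minutes to about 43 minutes.
for target in (99.5, 99.9):
    print(f"{target}% -> {round(downtime_minutes(target), 1)} minutes per 30 days")
```

Seen this way, the jump from 99.5% to 99.9% cuts tolerated downtime by a factor of five, which is why it usually calls for automated recovery and not faster manual response.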

Proton achieved 486% ROI and 30-35% productivity gains after infrastructure improvements, demonstrating the tangible value of strategic planning. Their success came from systematically addressing bottlenecks rather than pursuing theoretical perfection.
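
An ROI percentage like the one above is conventionally computed as net benefit divided by cost. The sketch below shows that arithmetic; the dollar amounts are hypothetical, chosen only so the result matches 486%, and are not Proton's actual figures.

```python
def roi_percent(total_benefit: float, total_cost: float) -> float:
    """Return on investment as a percentage: (benefit - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) * 100 / total_cost


# Hypothetical example: a 100,000 investment that returns 586,000 in
# savings and added revenue yields a 486% ROI.
print(roi_percent(586_000, 100_000))
```

Note that a 486% ROI means the net gain is 4.86 times the cost, so total benefits are 5.86 times the investment.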

Optimization techniques extend beyond software architecture. Data center cooling innovations reduce energy costs by 30% while improving hardware reliability. GPU acceleration transforms machine learning workloads that previously required massive CPU clusters. Network scaling through edge computing brings processing closer to users, reducing latency and bandwidth costs.

Your optimization roadmap should prioritize changes with the highest impact-to-effort ratio. Quick wins like caching frequently accessed data or optimizing database queries often deliver immediate improvements. Longer-term initiatives like architectural refactoring require careful planning but unlock sustained performance gains.
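
As an example of a caching quick win, here is a minimal time-to-live cache sketch. The class and method names are hypothetical, and a real deployment would more likely use a shared cache service so that all application instances benefit.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Keep loaded values in memory and reuse them until they expire."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_load(self, key: Hashable, loader: Callable[[], Any]) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]  # fresh hit: skip the expensive load
        value = loader()  # miss or expired: load and remember
        self._entries[key] = (now, value)
        return value
```

A call such as `cache.get_or_load(("profile", user_id), lambda: load_profile(user_id))` would hit the database at most once per TTL window per user, where `load_profile` stands in for any slow query. The TTL is the tradeoff to tune: longer values save more load but serve staler data.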

Periodic review checklist for scaling decisions:

- Analyze traffic patterns to identify growth trends and seasonal variations

- Review cost reports to find optimization opportunities and budget anomalies

- Survey development teams about infrastructure pain points and blockers

- Benchmark performance against industry standards and competitor capabilities

- Evaluate new technologies and services that could improve efficiency

- Update capacity plans based on business growth projections

Improving workflow visibility helps teams identify optimization opportunities before they become critical issues. Transparent metrics create shared understanding of system behavior and enable data-driven decisions about resource allocation.

Continuous optimization requires balancing competing priorities. Performance improvements often increase complexity, while cost reduction can impact reliability. The right balance depends on your business stage, competitive position, and growth trajectory. Establish clear decision frameworks that weigh these tradeoffs consistently.

Explore tailored digital solutions with Rule27 Design

Building effective digital infrastructure requires both technical expertise and deep understanding of business operations. Rule27 Design specializes in creating custom digital infrastructure solutions for growth-stage companies that have outgrown basic tools but aren’t ready for enterprise complexity.

Our approach combines the scalability principles covered in this guide with practical implementation tailored to your specific workflows. We’ve helped clients achieve 40% operational efficiency improvements by designing systems that match how teams actually work. Whether you need custom admin panels, content management systems, or integrated business intelligence tools, we build infrastructure that scales with your ambitions.

The information architecture foundations and system scalability strategies we implement create lasting competitive advantages. Our clients gain not just better technology, but clearer insight into their operations and faster execution on strategic initiatives. Ready to transform your digital infrastructure? Let’s discuss how tailored solutions can accelerate your growth.

Frequently asked questions

What is digital infrastructure planning?

Digital infrastructure planning is the strategic process of designing compute, storage, networking, and security systems that support business operations and growth. It involves selecting appropriate architectures, defining scaling approaches, and establishing monitoring frameworks that ensure reliability and performance. Effective planning balances immediate technical needs with long-term business objectives, creating foundations that adapt as requirements evolve.

How do I choose the right architecture for my company?

Choose architecture based on your specific workload characteristics, scalability requirements, and existing system constraints. Event-driven, stateless workloads benefit from serverless approaches, while stateful applications often require container-based or traditional architectures. Balance serverless-first principles with pragmatic use of established patterns where they fit better, creating hybrid systems that leverage each model’s strengths for optimal results.

What are the key metrics to track post-implementation?

Track response time percentiles, infrastructure costs per user, system uptime, and deployment frequency to measure infrastructure success. Monitor error rates and recovery times to ensure reliability, while surveying development teams about productivity and pain points. Use these metrics to drive continuous optimization, focusing improvements on areas with the highest business impact and addressing bottlenecks before they limit growth.

Why is scalability important for growth-stage companies?

Scalability ensures your infrastructure adapts to business growth without requiring costly rebuilds or migrations that slow product development. It supports seamless user experiences during traffic spikes, maintains performance as data volumes increase, and enables rapid experimentation with new features. Companies with scalable infrastructure respond to market opportunities 60% faster than those constrained by technical limitations, creating sustainable competitive advantages.

What ROI can I expect from infrastructure improvements?

Results vary with your starting point, but well-targeted infrastructure improvements can deliver substantial returns through reduced operational costs, improved developer productivity, and better system reliability. In the Proton example cited above, the reported figures were 486% ROI and 30-35% productivity gains, as teams spend less time on maintenance and more on feature development. Cost savings come from optimized resource utilization, while revenue benefits stem from faster time-to-market and improved user experiences that drive engagement and retention.

About the Author

Josh Anderson, Co-Founder & CEO at Rule27 Design

Operations leader and full-stack developer with 15 years of experience disrupting traditional business models. I don't just strategize, I build. From architecting operational transformations to coding the platforms that enable them, I deliver end-to-end solutions that drive real impact. My rare combination of technical expertise and strategic vision allows me to identify inefficiencies, design streamlined processes, and personally develop the technology that brings innovation to life.
